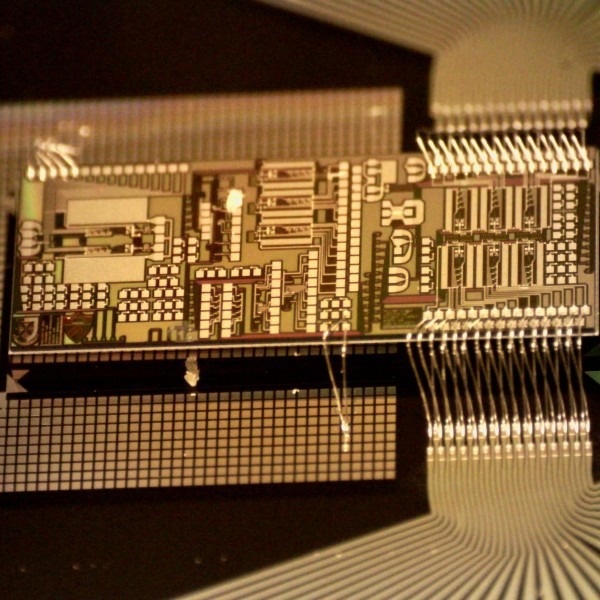

The chip used for this work. Image Credit: The George Washington University/Queen's University.

Machine learning applications have skyrocketed to $165 billion annually, according to a recent McKinsey report.

Training modern artificial intelligence (AI) systems such as Tesla’s Autopilot costs millions of dollars in electric power and requires supercomputer-like infrastructure. This surging AI “appetite” leaves an ever-widening gap between the demand for AI and the computer hardware available to meet it.

Photonic integrated circuits, or optical chips, have emerged as a possible way to deliver greater computing performance, as measured by the number of operations performed per second per watt of power used (tera-operations per second per watt, or TOPS/W).
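To make the TOPS/W figure of merit concrete, here is a minimal Python sketch of the arithmetic. The function name and the example numbers are hypothetical and chosen only for illustration; they are not measurements from the study.

```python
def tops_per_watt(ops_per_second: float, power_watts: float) -> float:
    """Return efficiency in tera-operations per second per watt (TOPS/W)."""
    if power_watts <= 0:
        raise ValueError("power_watts must be positive")
    # 1 tera-operation = 1e12 operations.
    return ops_per_second / 1e12 / power_watts


# Hypothetical accelerator: 2e14 operations per second while drawing 50 W.
print(tops_per_watt(2e14, 50.0))  # 4.0
```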

Photonic chips have already demonstrated improved core operations in machine intelligence, such as those used for data classification, but they have yet to improve the actual front-end learning and machine training process.

The Solution

Machine learning is a two-step procedure. First, data is used to train the system; then, other data is used to test the AI system’s performance. In a new study, a research group from the George Washington University, Queen's University, the University of British Columbia, and Princeton University set out to do just that.

After one training step, the research group observed the error and reconfigured the hardware for a second training cycle, followed by additional training cycles until sufficient AI performance was reached (for example, until the system could correctly label objects appearing in a movie).
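The paper's title names direct feedback alignment (DFA) as the training method. In DFA, the output error is sent to the hidden layer through a fixed random feedback matrix rather than through the transpose of the forward weights, as backpropagation would do. The following is a minimal software sketch of that observe-error-then-update loop, not the authors' photonic implementation. The toy OR dataset, the network size, the learning rate, and the function name `train_dfa` are all assumptions made for illustration.

```python
import math
import random


def train_dfa(epochs=500, lr=0.1, hidden=4, seed=0):
    """Train a tiny one-hidden-layer network on OR with direct feedback alignment.

    Returns (initial_loss, final_loss), each the mean cross-entropy over the data.
    """
    rng = random.Random(seed)
    data = [((0.0, 0.0), 0.0), ((0.0, 1.0), 1.0),
            ((1.0, 0.0), 1.0), ((1.0, 1.0), 1.0)]
    # Forward weights (trained); the last entry of each row is a bias.
    w1 = [[rng.uniform(-0.5, 0.5) for _ in range(3)] for _ in range(hidden)]
    w2 = [rng.uniform(-0.5, 0.5) for _ in range(hidden + 1)]
    # Fixed random feedback weights (never trained): the defining feature of DFA.
    b = [rng.uniform(-0.5, 0.5) for _ in range(hidden)]

    def forward(x):
        h = [math.tanh(row[0] * x[0] + row[1] * x[1] + row[2]) for row in w1]
        z = sum(w * hj for w, hj in zip(w2, h)) + w2[-1]
        return h, 1.0 / (1.0 + math.exp(-z))

    def mean_loss():
        total = 0.0
        for x, t in data:
            _, y = forward(x)
            total -= t * math.log(y) + (1.0 - t) * math.log(1.0 - y)
        return total / len(data)

    initial = mean_loss()
    for _ in range(epochs):
        for x, t in data:
            h, y = forward(x)
            e = y - t  # output error observed after this training step
            for j in range(hidden):
                # DFA: project the error through fixed b[j], not through w2[j].
                delta = b[j] * e * (1.0 - h[j] ** 2)
                w1[j][0] -= lr * delta * x[0]
                w1[j][1] -= lr * delta * x[1]
                w1[j][2] -= lr * delta
                w2[j] -= lr * e * h[j]  # output layer uses the error directly
            w2[-1] -= lr * e
    return initial, mean_loss()


initial, final = train_dfa()
print(f"mean loss before training: {initial:.3f}, after: {final:.3f}")
```

Because the feedback weights `b` stay fixed, each layer's update depends only on the output error and locally available signals, so the error does not have to be propagated backward layer by layer.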

Until now, photonic chips have only demonstrated the ability to classify and infer information from data. The researchers have now made it possible to speed up the training step itself.

This added AI capability is part of a larger effort around photonic tensor cores and other electronic-photonic application-specific integrated circuits (ASICs) that leverage photonic chip manufacturing for machine learning and AI applications.

From the Researchers

“This novel hardware will speed up the training of machine learning systems and harness the best of what both photonics and electronic chips have to offer. It is a major leap forward for AI hardware acceleration. These are the kinds of advancements we need in the semiconductor industry as underscored by the recently passed CHIPS Act,” stated Volker Sorger, Professor of Electrical and Computer Engineering at the George Washington University and founder of the start-up company Optelligence.

“The training of AI systems costs a significant amount of energy and carbon footprint. For example, a single AI transformer takes about five times as much CO2 in electricity as a gasoline car spends in its lifetime. Our training on photonic chips will help to reduce this overhead.”

Bhavin Shastri, Assistant Professor, Physics Department, Queen's University

Journal Reference:

Filipovich, M. J., et al. (2022) Silicon photonic architecture for training deep neural networks with direct feedback alignment. Optica. doi.org/10.1364/OPTICA.475493.