By Megan Davies, Nov 7 2019

Cyber-physical systems and the Internet of Things (IoT) have opened up many possibilities for smart homes and smart cities and are transforming people's lives. In this smart era, remotely monitoring people in everyday life with radio-frequency probe signals is increasingly necessary.

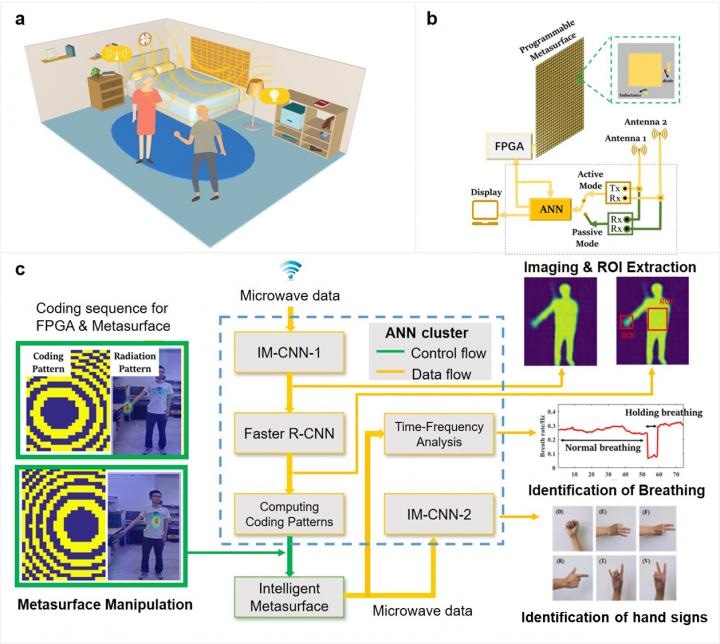

(a) An illustrative scenario for monitoring people in a typical indoor environment in a smart, real-time, and inexpensive way. (b) The schematic configuration of the intelligent metasurface system. (c) The microwave data processing flow using deep learning CNNs. Image Credit: Lianlin Li, Ya Shuang, Qian Ma, Haoyang Li, Hanting Zhao, Menglin Wei, Che Liu, Chenglong Hao, Cheng-Wei Qiu, and Tie Jun Cui.

But traditional sensing systems cannot be deployed in real-world settings, because they generally require the people being monitored either to cooperate deliberately or to carry an active wireless device or identification tag. Moreover, current sensing systems are neither programmable nor adaptive to specific tasks, which makes them inefficient in several respects, including energy consumption and sensing time.

In a new study reported in Light: Science & Applications, researchers from the State Key Laboratory of Advanced Optical Communication Systems and Networks, Department of Electronics, Peking University, China; the State Key Laboratory of Millimeter Waves, Southeast University, China; and their colleagues created an AI-powered smart metasurface that jointly manipulates electromagnetic (EM) waves at the physical level and the EM data flux in the digital pipeline.

Using the metasurface as the basis, they developed a low-cost intelligent EM “camera” that performs instantaneous in-situ imaging of a full scene and adaptive recognition of the vital signs and hand signs of several non-cooperative people.

Most strikingly, the EM camera works efficiently even when it is passively excited by the stray 2.4 GHz Wi-Fi signals that are omnipresent in everyday life. In essence, the intelligent camera lets users remotely “see” people’s actions, track changes in their physiological states, and “hear” what people are saying without fitting any acoustic sensors, even if the people are non-cooperative and are behind obstacles.

The method reported in the study could open new routes to future smart homes, smart cities, health monitoring, human-device interactive interfaces, and safety screening, without raising visual privacy problems.

The intelligent EM camera is based on a smart metasurface, that is, a programmable metasurface driven by a cluster of artificial neural networks (ANNs). The metasurface can be controlled to produce the radiation patterns required for different sensing tasks, such as imaging, data acquisition, and automatic recognition.

It can support different types of successive sensing tasks in real time using a single device. The researchers outline the operational principle of their camera:

“We design a large-aperture programmable coding metasurface for three purposes in one: (1) to perform in-situ high-resolution imaging of multiple people in a full-view scene; (2) to rapidly focus EM fields (including ambient stray Wi-Fi signals) to selected local spots and avoid undesired interferences from the body trunk and ambient environment; and (3) to monitor the local body signs and vital signs of multiple non-cooperative people in real-world settings by instantly scanning the local body parts of interest.”

“Since the switching rate of metasurface is remarkably faster than that of body changing (vital sign and hand sign) by a factor of ~ , the number of people monitored in principle can be very large,” they added.
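To make purpose (2) in the quote above more concrete, the following is a minimal Python sketch of how a programmable coding metasurface could, in principle, focus a 2.4 GHz field onto a chosen spot. It is an illustration under simplifying assumptions and not the researchers' implementation: the 16 × 16 array size, half-wavelength element pitch, 1-bit (0 or π) phase states, uniform illumination, scalar point-source model, and all function names are hypothetical choices made for this example. In the study the coding patterns are produced by neural networks, whereas this sketch uses a simple analytic rule.

```python
import numpy as np

C = 299_792_458.0           # speed of light in m/s
FREQ_HZ = 2.4e9             # Wi-Fi band mentioned in the article
WAVELENGTH = C / FREQ_HZ    # roughly 0.125 m
K = 2 * np.pi / WAVELENGTH  # free-space wavenumber


def element_positions(n_rows, n_cols, pitch):
    """Centres of the meta-atoms on a flat panel in the z = 0 plane (hypothetical layout)."""
    ys = (np.arange(n_rows) - (n_rows - 1) / 2) * pitch
    xs = (np.arange(n_cols) - (n_cols - 1) / 2) * pitch
    gx, gy = np.meshgrid(xs, ys)
    return np.stack([gx.ravel(), gy.ravel(), np.zeros(gx.size)], axis=1)


def focusing_code(positions, focus):
    """1-bit coding pattern: 0 means no added phase, 1 means an added phase of pi.

    Each element ideally adds a phase equal to K * distance so that all
    contributions arrive in phase at the focus; the ideal phase is then
    rounded to the nearer of the two available states.
    """
    dist = np.linalg.norm(positions - focus, axis=1)
    ideal_phase = (K * dist) % (2 * np.pi)
    return ((ideal_phase > np.pi / 2) & (ideal_phase < 3 * np.pi / 2)).astype(int)


def field_intensity(positions, code, point):
    """Scalar field intensity at `point`, treating every element as a point source."""
    dist = np.linalg.norm(positions - point, axis=1)
    contributions = np.exp(1j * (np.pi * code - K * dist)) / dist
    return np.abs(contributions.sum()) ** 2


positions = element_positions(16, 16, WAVELENGTH / 2)
focus = np.array([0.3, -0.2, 1.5])  # hypothetical spot, 1.5 m in front of the panel
code = focusing_code(positions, focus)

# Compare against the same panel with every element left in state 0.
gain = field_intensity(positions, code, focus) / field_intensity(
    positions, np.zeros_like(code), focus
)
print(f"Focusing gain over an all-zero coding pattern: {gain:.1f}x")
```

Because each element has only two states, the residual phase error at the focus is at most a quarter of a cycle, so every contribution still adds constructively. Re-running `focusing_code` with a different `focus` yields a new pattern, which is the sense in which a single panel could be steered from one body part of interest to the next.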

“The presented technique can be used to monitor the notable or non-notable movements of non-cooperative people in the real world but also help people with profound disabilities remotely send commands to devices using body languages. This breakthrough could open a new venue for future smart cities, smart homes, human-device interactive interface, healthy monitoring, and safety screening without causing privacy issues,” the researchers predict.

This study was supported by the National Key Research and Development Program of China, the National Natural Science Foundation of China, and the 111 Project.