Aug 2 2016

When it comes to parking assistance or detecting pedestrians, modern cars can analyze their environment with high precision. So far, though, what goes on inside the car has received far less attention. A new type of system changes that: it recognizes the number and size of the people inside the car and where they are focusing their attention. In this way, it provides the basis for novel assistance systems, such as those for autonomous driving.

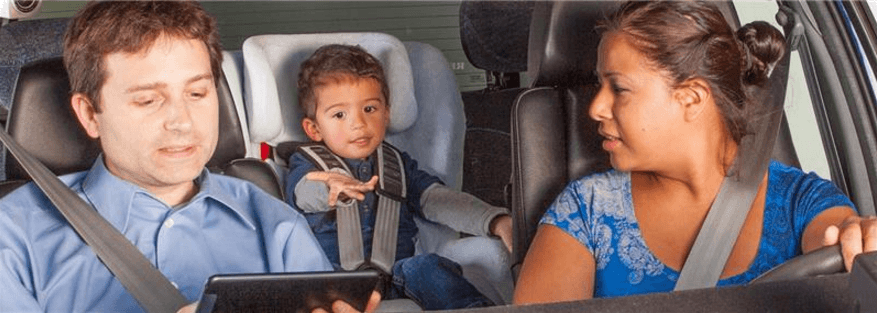

Assistance systems will soon be able to detect what vehicle occupants are doing – an important feature for autonomous driving, for example. (© Photo Fraunhofer IAO)

Eyes firmly on the road, ready to react – this is how motorists usually sit behind the wheel. But this could change once cars can steer and brake automatically: drivers can lean back and relax, send a message on their smartphone, turn to the children in the back seat, or temporarily enjoy an expanded infotainment program. In this vision of autonomous driving, drivers can use their travel time for activities besides driving, and this puts new demands on assistance systems. Numerous sensors already analyze a car’s environment; similar knowledge is needed about the interior in order to realize a more intuitive interior and interaction experience.

Basis for the new assistance systems

Researchers at the Fraunhofer Institutes for Optronics, System Technologies and Image Exploitation IOSB in Karlsruhe and for Industrial Engineering IAO in Stuttgart are collaborating with their colleagues at Volkswagen Group Research, Bosch, Visteon and other companies as part of the “Intelligent Car Interior” project, or InCarIn for short. Together, they are developing a system designed for the vehicle interior. The project is funded by the German Federal Ministry of Education and Research (BMBF). “We are expanding sensor technology to the entire interior,” says Dr. Michael Voit, group manager at Fraunhofer IOSB. “Using depth-perception cameras, we capture the vehicle’s interior, identify the number of people, their size and their posture. From this we can deduce their activities.”

The long-term goal is to build new assistance systems. Among other applications, this is important for partially autonomous driving situations. For example, say the driver needs to turn around to the children sitting in the back seat. The system could display a video image of the back seat, so that the driver can keep their eyes on the road yet still see what the children are up to.

“Using the sensors, the system can estimate how long the driver will need to resume full control of the vehicle following a period of autonomous driving,” says Frederik Diederichs, scientist and project manager at Fraunhofer IAO. Based on the information regarding where people are sitting and how big they are, airbags could also be adjusted to individual body sizes. And by analyzing the position of a passenger’s limbs, airbags could recognize special situations, such as when a passenger has their feet on the dashboard.

System recognizes people’s activities

The main challenge lies in evaluating the recorded data. The software can already detect people and their limbs, and a skeleton-like model overlaid on the images of the people can trace their movements. But how can the computer be taught to recognize what passengers are doing? “One challenge is to reliably identify the objects people are using. If one considers that in principle, any object could find its way into the vehicle, we must somehow limit the number of detectable possibilities. We therefore set basic parameters and tell the computer where the sun visor and glove compartment are, for example,” says Voit.
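
The idea of telling the computer where fixed interior elements are can be illustrated with a minimal sketch. It is not the project's software: the region names come from the quote above, but the box coordinates, the coordinate frame and the names `Region` and `region_for_joint` are assumptions made purely for illustration. The sketch checks whether a tracked hand position from the skeleton falls inside one of a few predefined boxes, which narrows down what the person might be interacting with.

```python
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple

Point = Tuple[float, float, float]  # x, y, z in metres, in a hypothetical cabin frame


@dataclass(frozen=True)
class Region:
    """Axis-aligned box around a fixed interior element (illustrative values only)."""
    name: str
    lo: Point
    hi: Point

    def contains(self, p: Point) -> bool:
        # A point is inside the box if every coordinate lies between the bounds.
        return all(l <= c <= h for l, c, h in zip(self.lo, p, self.hi))


# Hypothetical positions; a real system would calibrate these per vehicle model.
REGIONS = [
    Region("sun_visor", (0.2, 0.3, 1.1), (0.6, 0.5, 1.3)),
    Region("glove_compartment", (-0.6, 0.5, 0.4), (-0.2, 0.8, 0.7)),
]


def region_for_joint(p: Point, regions: Sequence[Region] = REGIONS) -> Optional[str]:
    """Return the name of the first known region containing the joint, if any."""
    for region in regions:
        if region.contains(p):
            return region.name
    return None


if __name__ == "__main__":
    hand = (0.4, 0.4, 1.2)  # made-up hand position from a skeleton tracker
    print(region_for_joint(hand))
```

Restricting the search to a handful of known regions reflects the approach Voit describes: instead of recognizing arbitrary objects, the system only has to decide which of a few predefined places a limb is near.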

The researchers first tested and refined the cameras and the associated evaluation algorithms in Fraunhofer IAO’s own driving simulator. Next, the system will be integrated into a Volkswagen Multivan to see what it can do. The results will form the basis for new vehicle concepts for the next five to ten years.