Interest in mixed, augmented and virtual reality (MR/AR/VR) has increased in recent years, with an ever-expanding range of smart glasses, AR/MR industrial devices, and VR headsets in use. These systems are collectively called ‘XR,’ and the XR market is predicted to keep expanding rapidly, at a compound annual growth rate (CAGR) of 46% through 2025 [1].

To create these devices, engineers have employed a range of technologies, produced a wide array of optical systems, and applied several display types. As a result, XR devices are highly diverse in function, architecture, and form, with designs tailored to different application goals.

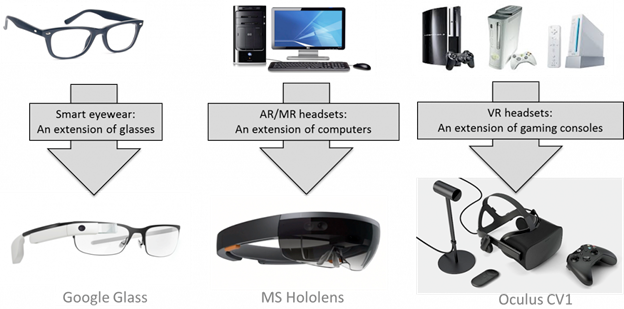

Generally speaking, “Three categories of head mounted displays have emerged: smart glasses, or digital eyewear, as an extension of eyewear; virtual reality headsets as an extension of gaming consoles; and augmented and mixed reality systems as an extension of computing hardware.” [2]

Three types of head-mounted displays (HMDs) and their antecedents. Image Source: Electro Optics

For example, a pair of AR smart glasses designed for consumers may be built to minimize hardware weight and size, typically by using smaller displays or optical modules, which in turn reduces the display field of view (FOV).

Conversely, a VR headset developed for gaming may be engineered to maximize FOV and optimize visual performance using varifocal and/or high-resolution displays.

An MR headset designed for a military application may be engineered to increase functionality and durability, integrating with helmet hardware to securely and fully enclose the user’s head.

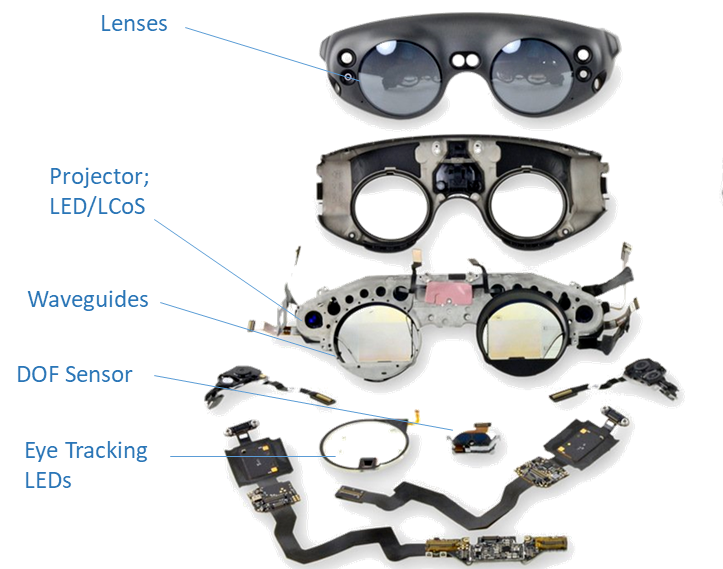

Despite this diversity, some components are common to nearly all XR devices, because they serve the core purpose of displaying digital images to the user.

Known as head-mounted displays (HMDs) or near-eye displays (NEDs), XR headsets normally feature a light engine, lenses, a power supply/battery, a display mechanism, input devices such as sensors and a camera, and a computational processor, although the specifications of each element can differ greatly from one device to the next.

As an example, AR/MR devices frequently employ waveguides as a display combiner but can use an almost endless range of waveguide combinations, structures and configurations.

Optical components of the Magic Leap One MR device. Image Source: iFixit

After the main functional components, further elements can also be incorporated, such as audio speakers and microphones, USB ports, and touchpads or control buttons.

Cameras can provide photo capabilities for the user and can also serve as device input. Several kinds of sensors may be included, such as magnetometers, gyroscopes, humidity and altitude sensors, accelerometers, and near-infrared (NIR) 3D sensing emitters/receivers.

Hardware components of the Vuzix Blade smart glasses. Image Credit: Vuzix

AR/VR/MR Components

All of the hardware components in an XR device can present performance, design and quality issues that must be addressed through careful design and testing.

The rest of this article will review some of the main optical elements of AR/MR smart glasses and the visual quality issues they may face.

The array of components in Microsoft’s HoloLens 2 MR glasses. Image Credit: Microsoft

Computer Processor

The term “wearable computers” has been used to describe AR/MR devices, so, not surprisingly, the central processing unit (CPU) is an essential element. The computing unit is frequently located in one of the temples, the arms on each side that curve behind the user’s ears to keep the glasses in place.

The processor has to be very small to fit inside the temple; for example, the same Qualcomm Snapdragon chipset found in smartphones is used in Qualcomm’s Snapdragon™ XR1 platform [3].

Lenses

As with regular glasses, smart glasses require lenses.

Many AR/MR glasses currently on the market can be fitted with custom lenses that match the wearer’s prescription. Some also offer ‘smart lenses’ that adjust their tint to ambient light conditions to act as sunglasses, or that filter blue light for use with computer screens.

Ray-Ban Stories smart sunglasses, which integrate with Facebook, are offered with different lens color choices and a prescription lens option. Image Credit: Ray-Ban

Optics and Display Module

The picture generating unit (PGU) in AR/MR devices can feature a display module, which may be a projection system that employs LED/LCoS or lasers, or a microdisplay consisting of an OLED, MicroLED, or MicroOLED panel.

AR/MR devices also feature a combiner that merges light rays from the ambient environment with digital images produced by the device to form the combined view seen by the user.

Waveguides and Lightguides

The most popular combiners for AR/MR devices are waveguides. Optical waveguides are thin pieces of plastic or glass that transmit and bend light.

Light propagates through the waveguide by total internal reflection (TIR), reflecting repeatedly between the inner and outer surfaces of the waveguide layer until it is directed out toward the eye, where the digital and real-world images are combined into one.
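As a rough illustration of the TIR condition, the sketch below computes the critical angle from Snell's law, sin(θc) = n_outside / n_waveguide. Light that strikes the waveguide surface at an angle from the normal larger than θc stays trapped inside the layer. The function names and the refractive index of 1.5 (a typical value for glass) are assumptions chosen for illustration, not values from any specific device.

```python
import math


def critical_angle_deg(n_waveguide: float, n_outside: float = 1.0) -> float:
    """Critical angle for TIR, in degrees measured from the surface normal."""
    if n_waveguide <= n_outside:
        # TIR only occurs when light travels from a denser into a rarer medium.
        raise ValueError("waveguide index must exceed the surrounding index")
    return math.degrees(math.asin(n_outside / n_waveguide))


def is_guided(incidence_deg: float, n_waveguide: float, n_outside: float = 1.0) -> bool:
    """True if a ray at this internal incidence angle is totally reflected."""
    return incidence_deg > critical_angle_deg(n_waveguide, n_outside)


if __name__ == "__main__":
    # Assumed example: a glass-like layer (n = 1.5) surrounded by air (n = 1.0).
    print(f"{critical_angle_deg(1.5):.2f}")  # 41.81
    print(is_guided(50.0, 1.5))  # True: steeper than the critical angle
    print(is_guided(30.0, 1.5))  # False: the ray would leak out of the layer
```

A higher-index substrate lowers the critical angle, which widens the range of ray angles the layer can carry.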

Waveguides come in several types, such as polarized, reflective, holographic and diffractive. The physical features that control the light in these designs may be mirror arrays, prisms, metasurfaces or gratings, each of which can take many forms.

While combiners successfully direct images and light into the user’s eyes, they can also produce undesirable effects such as distortion, double images, or diffraction effects like blurring or color separation, and the combiner’s visible edges may be noticeable [4].

Adjustment and testing in the design process are required to mitigate these optical defects.

Close-up of the Magic Leap One smart glasses, which use a stack of waveguide layers (visible as slight striations on the square element in the center left of the lens). There are six waveguide layers, two for each color channel (red, green, blue). The two electronic elements on the right side of the lens are NIR LEDs for sensing. Image Credit: iFixit

XR Device Visual Quality Challenges

The wide array of component structures in XR devices presents manufacturers and designers with a complex range of quality testing challenges.

For example, the vast “number of sensors, and the technology associated with them, combined with the need to operate at low power and be lightweight, is an on-going challenge for the device builders.” [5]

The complexity of integrated optical, light and display systems establishes a need for advanced visual quality measurement and testing.

Various optical components from XR devices used to shape, bend, and transmit light to create images. Image Credit: Radiant Vision Systems

Because AR/MR displays are transparent, image quality can be affected by the substrates (for example, plastic or glass), by elements formed on the substrate layers (such as the nanostructures that support light guides), by the curvature or shape of the lens, or by the lens’ layers and films (for example, tints or anti-reflective coatings).

For example, images can appear distorted, or be displayed with an offset shadow image, called ghosting. Ghosting is normally produced when the image reflects off both the back and front surfaces of the lens.
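To give a feel for the size of this effect, the sketch below estimates the separation between the front-surface and back-surface reflections. It assumes a much simpler geometry than a real lens: a flat, parallel-sided plate in air, for which the offset between the two reflected beams is 2·t·tan(θr)·cos(θi), with θr given by Snell's law. The function name and the example thickness, index and angle are hypothetical.

```python
import math


def ghost_offset_mm(thickness_mm: float, refractive_index: float, incidence_deg: float) -> float:
    """Separation between front- and back-surface reflections of a flat plate in air.

    Simplifying assumption: plane-parallel plate, single back-surface bounce.
    """
    theta_i = math.radians(incidence_deg)
    # Snell's law gives the refraction angle inside the plate.
    theta_r = math.asin(math.sin(theta_i) / refractive_index)
    # The back-surface ray shifts 2*t*tan(theta_r) along the surface before exiting;
    # multiplying by cos(theta_i) gives the gap measured across the reflected beams.
    return 2.0 * thickness_mm * math.tan(theta_r) * math.cos(theta_i)


if __name__ == "__main__":
    # Assumed example: 1 mm thick plate, n = 1.5, viewed at 45 degrees.
    print(f"{ghost_offset_mm(1.0, 1.5, 45.0):.3f} mm")
    # At normal incidence the two reflections overlap, so no offset is visible.
    print(ghost_offset_mm(1.0, 1.5, 0.0))
```

Under these assumptions the offset grows with plate thickness, which is one reason thin substrates and anti-reflective coatings help suppress visible ghosts.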

XR devices with varifocal, foveated and multi-focal optical designs, and novel approaches such as liquid lens optics, only add to the complexity of visual quality measurement.

Another look at HoloLens 2 components, partially disassembled. Image Credit: Radiant Vision Systems

Issues such as irregular color (chromaticity) or brightness (luminance), distortion, rotation, poor contrast against the real-world backdrop and incorrect or incomplete shapes can also be found in AR/MR images.
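Two of the issues listed above, uneven luminance and poor contrast against the real-world backdrop, can be expressed as simple ratios. The sketch below is a minimal illustration under stated assumptions: uniformity is taken as the minimum over the maximum of a set of sampled luminance values, and see-through contrast as (display + background) / background. The function names, the optional lens transmission parameter and the sample values are hypothetical, and real test systems may use other definitions.

```python
def luminance_uniformity(samples_nits):
    """Ratio of the dimmest to the brightest sampled luminance (1.0 = perfectly uniform)."""
    if not samples_nits or min(samples_nits) < 0:
        raise ValueError("need at least one non-negative luminance sample")
    brightest = max(samples_nits)
    if brightest == 0:
        raise ValueError("all samples are zero; uniformity is undefined")
    return min(samples_nits) / brightest


def see_through_contrast(display_nits, ambient_nits, lens_transmission=1.0):
    """Contrast of a virtual image seen on top of the real-world background."""
    # Background luminance that reaches the eye through the lens.
    background = ambient_nits * lens_transmission
    if background <= 0:
        raise ValueError("background luminance must be positive")
    return (display_nits + background) / background


if __name__ == "__main__":
    # Assumed luminance samples (in nits) taken at three points across the image.
    print(luminance_uniformity([100.0, 90.0, 80.0]))  # 0.8
    # Assumed 500-nit virtual image over a 250-nit background.
    print(see_through_contrast(500.0, 250.0))  # 3.0
```

The contrast ratio falls toward 1 as the background gets brighter, which is why AR images that look vivid indoors can wash out in daylight.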

Addressing all of these quality considerations across devices with distinct optical characteristics is a constant challenge for XR manufacturers.

Device manufacturers need a range of visual tests to characterize devices that differ in form factor and in specifications such as angular FOV, resolution or focus.
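Angular FOV and resolution are linked: the same pixel count spread over a wider FOV gives a coarser image. The sketch below shows one common way to relate them, pixels per degree, under the simplifying assumption that pixels are spread evenly across the FOV (real optics are not perfectly uniform). The function names and display figures are hypothetical.

```python
def pixels_per_degree(pixels_across: int, fov_deg: float) -> float:
    """Average angular resolution, assuming pixels are spread evenly over the FOV."""
    if pixels_across <= 0 or fov_deg <= 0:
        raise ValueError("pixel count and FOV must be positive")
    return pixels_across / fov_deg


def arcmin_per_pixel(pixels_across: int, fov_deg: float) -> float:
    """Angle subtended by one pixel, in arcminutes (60 arcminutes = 1 degree)."""
    return 60.0 / pixels_per_degree(pixels_across, fov_deg)


if __name__ == "__main__":
    # Assumed example: 1920 horizontal pixels shown across a 40-degree horizontal FOV.
    print(pixels_per_degree(1920, 40.0))  # 48.0
    print(arcmin_per_pixel(1920, 40.0))  # 1.25
```

Doubling the FOV of this example display to 80 degrees without adding pixels would halve the angular resolution, which illustrates the trade-off between immersion and sharpness.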

Radiant Vision Systems offers solutions for measuring and testing XR displays to verify that the visual experience is high quality, as seen from the wearer’s eye position in smart glasses and headsets.

ProMetric® Imaging Colorimeters and Photometers with up to 61MP resolution offer imaging precision, while Radiant’s TT-ARVR™ Software delivers rapid, automated sequencing of device tests.

Radiant provides XR optical test systems (for example, the AR/VR Lens) that record up to 120° H FOV for assessing fully immersive visual experiences and larger display areas.

The image below presents Radiant Vision Systems solutions for testing display modules, near-infrared sensors, headset architecture, lenses, and light guides, giving display makers end-to-end, adaptable visual testing capabilities for all component phases of XR devices.

Image Credit: Radiant Vision Systems

References

1. Augmented Reality and Virtual Reality Market by Technology and Geography – Forecast and Analysis 2021-2025, Technavio, June 2021.

2. Kress, B., “Meeting the optical design challenges of mixed reality,” Electro Optics, January 31, 2019.

3. Harfield, J., “How Do Smart Glasses Work?” Make Use Of, July 14, 2021.

4. Guttag, K., “AR/MR Optics for Combining Light for a See-Through Display (Part 1),” KGOnTech, October 21, 2016.

5. Peddie, J., “Technology Issues.” In: Augmented Reality. Springer, Cham. 2017. DOI: https://doi.org/10.1007/978-3-319-54502-8_8

Acknowledgments

Produced from materials originally authored by Anne Corning from Radiant Vision Systems.

This information has been sourced, reviewed and adapted from materials provided by Konica Minolta Sensing Americas.